PySpark

from pyspark.sql.functions import mean

df.select(mean(df.column1)).show()Pandas

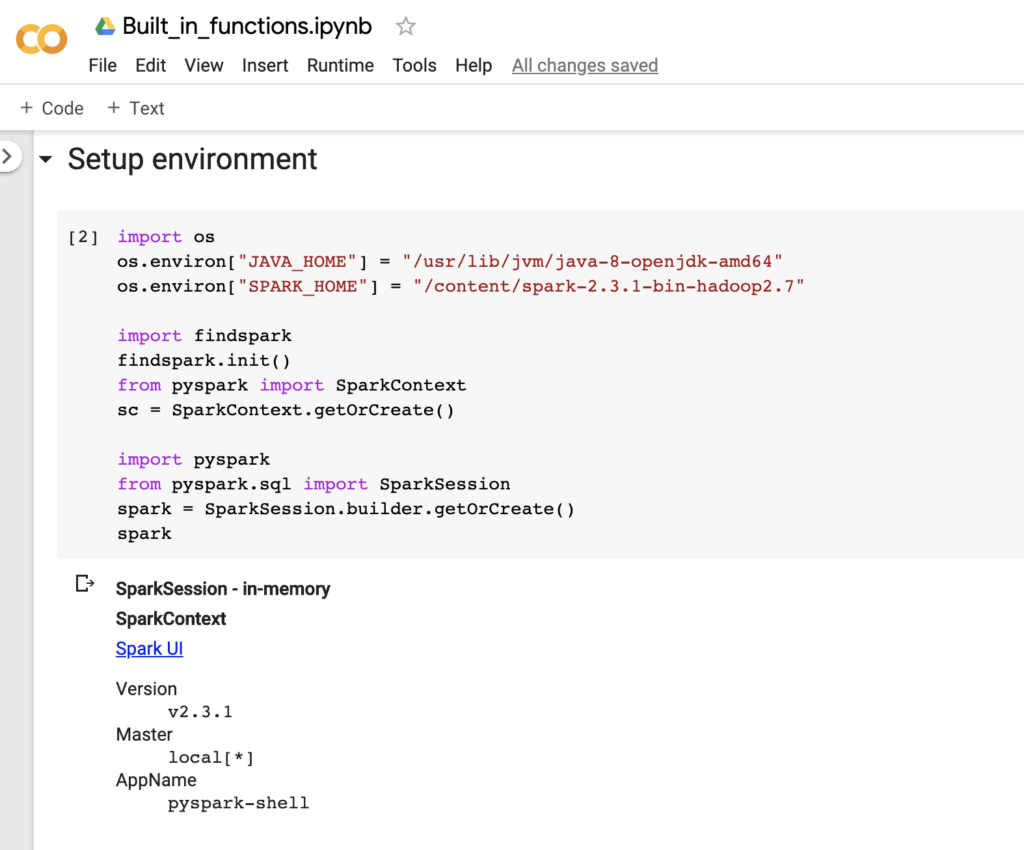

df.column1.mean()All code can be downloaded below and you can run it complete for free in Google Colab.

from pyspark.sql import functions

print(dir(functions))

- AutoBatchedSerializer

- Column

- DataFrame

- DataType

- PandasUDFType

- PickleSerializer

- PythonEvalType

- SparkContext

- StringType

- ascii

- asin

- atan

- atan2

- basse64

- bitwiseNOT

- blacklist

- UserDefinedFunction

- abs

- acos

- add_onths

- approxCountDistinct

- apprrox_count_distinct

- array

- array_containsss

- asc

- broadcast

- bround

- cbrt

- ceil

- coalesce

- col

- collect_list

- collect_set

- +188 more

So lets start to use the Spark functions:

from pyspark.sql.functions import lower, upper, substringIf you need any helper for any functions you can enter

help(substring)If you want to display the output in Upper you only need to select

rc.select(upper(col('Primary Type'))).show(5)Show the oldest date and the most recent date

rc.select(min(col('Date')),max(col('Date'))).show(1)Date

What is 3 days earlier that the oldest date and 3 days later than the most recent date?

from pyspark.sql.functions import date_sub, date_add

rc.select(date_sub(min(col('Date')),3),date_add(max(col('Date')),3)).show(1)

You can download our example here

Daniel Jordan

Fellow Consulting AG

If you have any question please contact me on this channels: